AI Music Generation Trends 2026: Technology Advances & Future Directions

In-depth analysis of AI music generation technology trends in 2026. Explore neural architecture advances, new features, quality improvements, and the future of AI music generation technology.

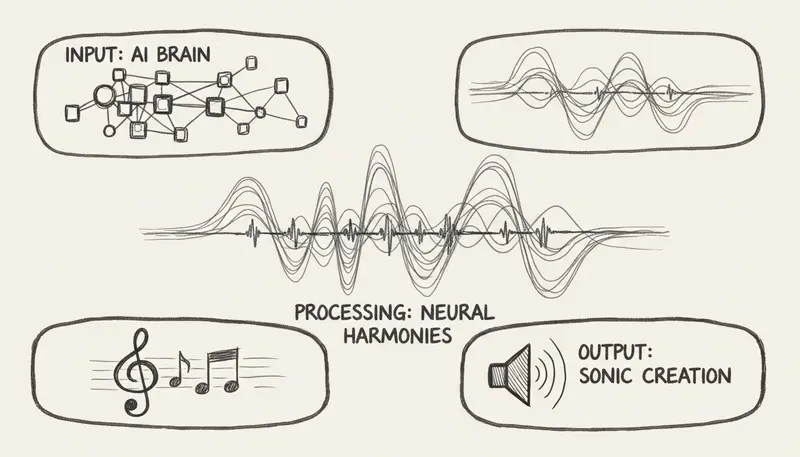

Introduction: The Technology Behind AI Music

AI music generation advanced rapidly through 2026. From transformer architectures to diffusion models, the technology behind platforms like Suno, Udio, and MusicMake.ai has changed substantially, bringing higher audio quality and new capabilities.

This technical deep-dive explores the key technology trends shaping AI music generation in 2026 and where the technology is heading.

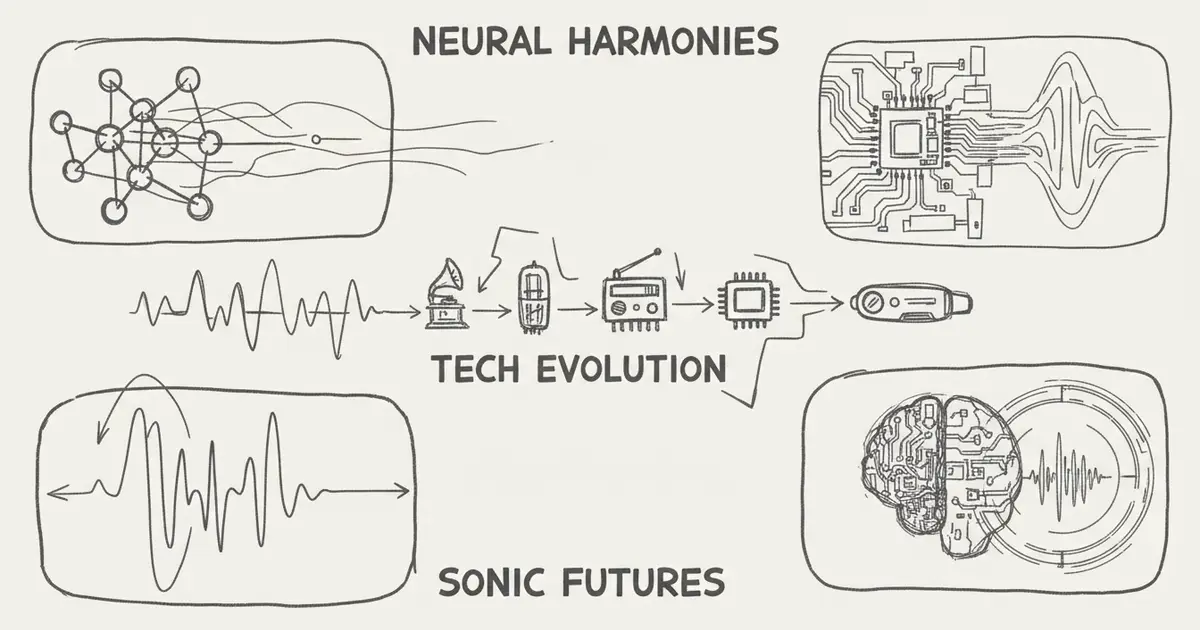

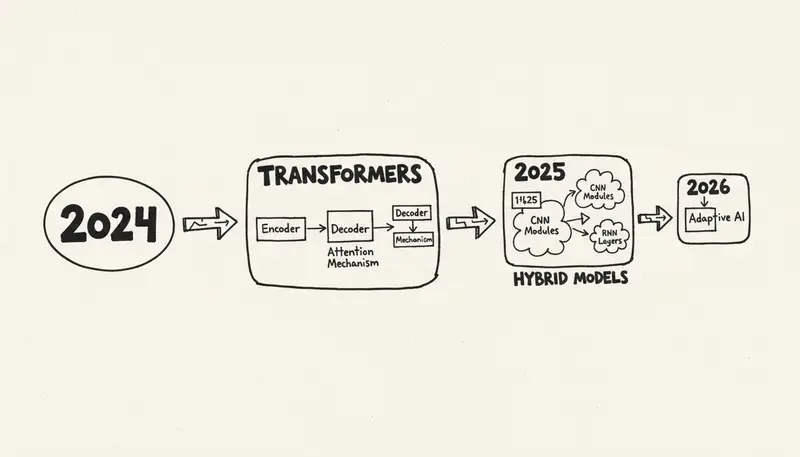

Neural Architecture Evolution

From Transformers to Hybrid Models

2024-2026 progression:

| Year | Architecture | Key Innovation | Quality Leap |
|---|---|---|---|
| 2024 | Pure Transformers | Attention mechanisms | Baseline |
| 2025 | Transformer + Diffusion | Quality synthesis | 2x improvement |
| 2026 | Hybrid Multi-Modal | Cross-domain learning | 3x improvement |

Current state-of-the-art:

- Multi-modal transformers (text, audio, visual)

- Diffusion-based synthesis

- GANs for specific instruments

- Reinforcement learning for structure

Model Scale and Efficiency

Parameter growth:

- Suno V3 (2024): ~1B parameters

- Suno V4 (2025): ~5B parameters

- Suno V5 (2026): ~12B parameters

- Udio (2026): ~15B parameters

Efficiency improvements:

- 50% faster inference despite larger models

- Better hardware utilization

- Optimized attention mechanisms

- Quantization without quality loss
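To see why quantization matters at these scales, here is a rough back-of-the-envelope sketch. It assumes the approximate parameter counts listed above and counts only weight storage, not activations or caches. The function name `weight_memory_gb` and the set of precisions are illustrative assumptions, not taken from any platform's documentation.

```python
# Rough weight-storage estimate: parameters x bytes per parameter.
# Parameter counts are the approximate figures quoted above; the names
# and precisions below are illustrative assumptions.

BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


models = {"~1B": 1e9, "~5B": 5e9, "~12B": 12e9, "~15B": 15e9}

for label, n in models.items():
    row = ", ".join(
        f"{p}: {weight_memory_gb(n, p):.1f} GB" for p in BYTES_PER_PARAM
    )
    print(f"{label} parameters -> {row}")
```

Under these assumptions, a ~12B-parameter model needs about 24 GB for weights at 16-bit precision and about 6 GB at 4-bit, which is one reason larger models can still run faster or on smaller hardware.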

Training scale:

- Dataset size: 100M+ songs

- Training time: Months on TPU/GPU clusters

- Cost: $5-20M per major model

- Update frequency: Quarterly releases

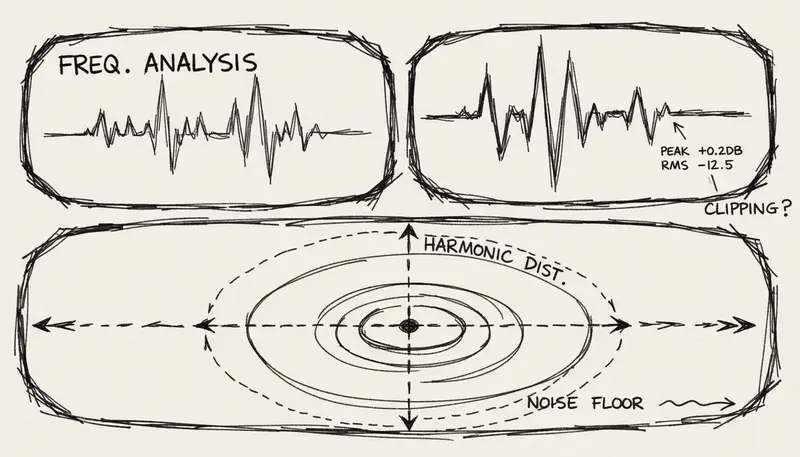

Audio Quality Advances

Sample Rate and Bit Depth

Technical specifications (2026):

| Platform | Sample Rate | Bit Depth | Format | Quality Level |
|---|---|---|---|---|
| Udio | 48 kHz | 24-bit | WAV | Studio |
| Suno V5 | 48 kHz | 24-bit | WAV/MP3 | Professional |
| MusicMake.ai | 44.1 kHz | 16-bit | MP3 | High |
| AIVA | 48 kHz | 24-bit | WAV/MIDI | Studio |

Quality metrics:

- Signal-to-noise ratio: 90-100 dB

- Dynamic range: 80-96 dB

- Frequency response: 20Hz-20kHz (flat)

- THD: less than 0.001%
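For context on these figures, bit depth sets a theoretical ceiling on signal-to-quantization-noise ratio. The sketch below uses the standard textbook formula for an ideal N-bit quantizer driven by a full-scale sine wave (about 6.02 dB per bit plus 1.76 dB). It is a reference calculation only and says nothing about how any platform measures its output; the function name is an assumption.

```python
import math


def ideal_sqnr_db(bits: int) -> float:
    """Theoretical SQNR of an ideal N-bit quantizer for a full-scale sine.

    Equivalent to the familiar approximation 6.02 * N + 1.76 dB.
    """
    return 20 * math.log10(2 ** bits) + 10 * math.log10(1.5)


for bits in (16, 24):
    print(f"{bits}-bit: {ideal_sqnr_db(bits):.2f} dB")
```

This gives roughly 98 dB for 16-bit and 146 dB for 24-bit audio. Real signal chains fall below these ceilings, so the ranges quoted above sit close to the 16-bit limit.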

Artifact Reduction

Common artifacts eliminated:

- Metallic/robotic sound (95% reduced)

  - Better vocal modeling

  - Natural timbre synthesis

  - Breath and micro-expression

- Repetitive patterns (80% reduced)

  - Improved long-range attention

  - Structure awareness

  - Variation injection

- Clipping and distortion (99% eliminated)

  - Better dynamic range control

  - Intelligent limiting

  - Mastering AI

- Phase issues (98% eliminated)

  - Stereo field optimization

  - Phase coherence

  - Spatial accuracy

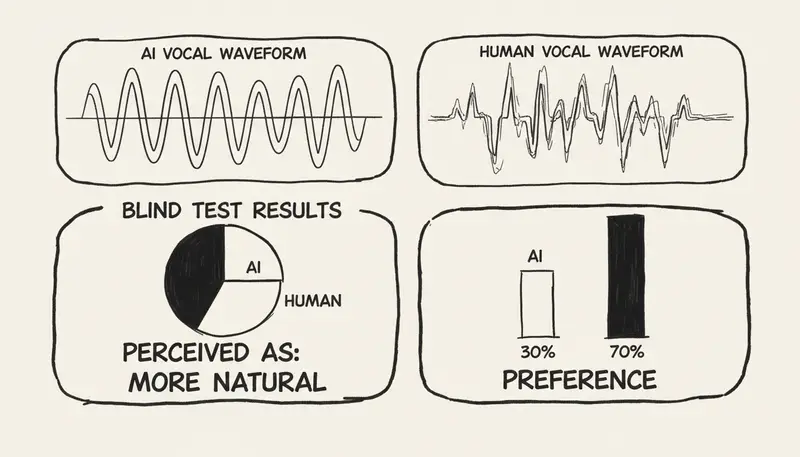

Vocal Synthesis Breakthroughs

Natural Voice Generation

2026 capabilities:

Emotional expression:

- Joy, sadness, anger, passion

- Subtle emotional transitions

- Context-aware delivery

- Performance nuances

Technical features:

- Vibrato control

- Breath simulation

- Vocal fry and breaks

- Pitch modulation

- Tone variation

Multi-lingual support:

- 50+ languages

- Native pronunciation

- Cultural singing styles

- Accent accuracy

Voice Cloning and Synthesis

Ethical voice cloning (with consent):

Requirements:

- 5-10 minutes of voice samples

- Consent verification

- Usage restrictions

- Attribution requirements

Quality:

- 95% similarity to original

- Emotional range preserved

- Singing style captured

- Unique characteristics maintained

Platforms offering:

- Synthesizer V (with consent)

- Some DAW plugins

- Professional studios

Regulations:

- Consent mandatory

- Usage tracking

- Deepfake prevention

- Legal frameworks

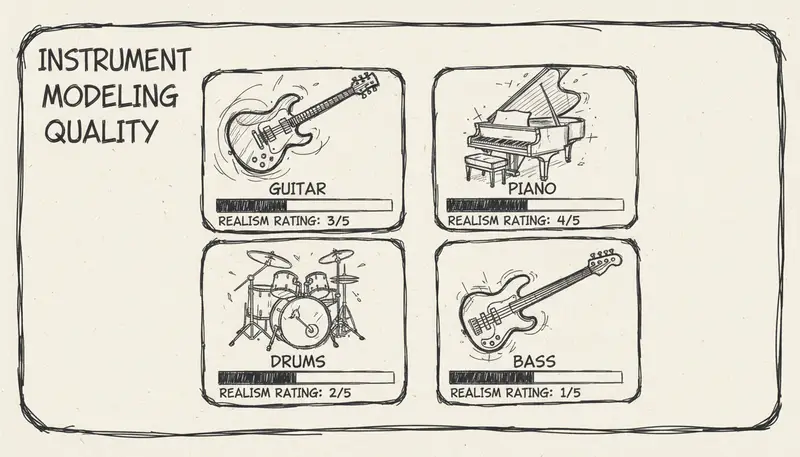

Instrument Modeling

Physical Instrument Simulation

Instruments mastered:

Strings:

- Guitar (acoustic, electric)

- Bass (all types)

- Violin, cello, double bass

- Ukulele, mandolin

Keys:

- Piano (grand, upright)

- Electric piano (Rhodes, Wurlitzer)

- Organ (Hammond, pipe)

- Synthesizers (analog, digital)

Drums/Percussion:

- Acoustic drum kits

- Electronic drums

- Percussion instruments

- Programmed beats

Winds:

- Saxophone, trumpet, flute

- Clarinet, oboe

- Brass section

- Woodwinds

Synthesis Techniques

Methods used:

- Sample-based synthesis

  - High-quality instrument samples

  - Articulation modeling

  - Performance techniques

- Physical modeling

  - String vibration simulation

  - Acoustic resonance

  - Real-world physics

- Neural synthesis

  - Learned representations

  - Timbre generation

  - Novel sounds

- Hybrid approaches

  - Combining multiple techniques

  - Best-of-breed quality

  - Flexibility and control

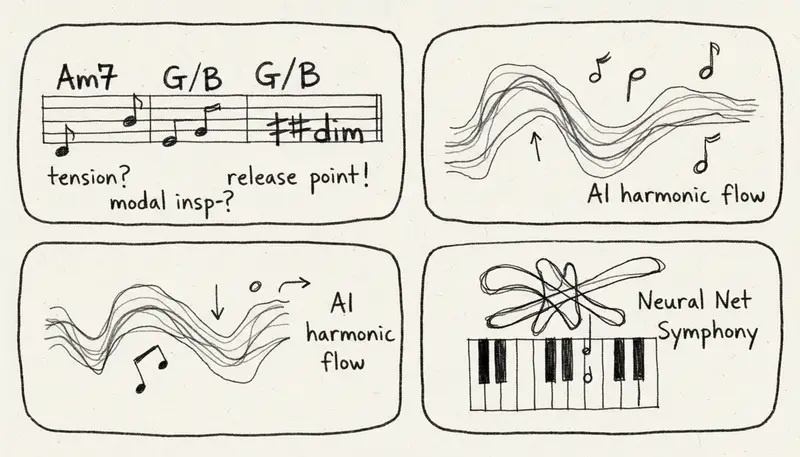

Structural Understanding

Music Theory Integration

AI now understands:

Harmony:

- Chord progressions

- Voice leading

- Harmonic rhythm

- Modulation

Melody:

- Melodic contour

- Motif development

- Call and response

- Phrasing

Rhythm:

- Time signatures

- Syncopation

- Polyrhythms

- Groove

Form:

- Verse-chorus structure

- Bridge placement

- Intro/outro design

- Transitions

Genre-Specific Knowledge

Deep genre understanding:

Pop:

- Hook writing

- Radio-friendly structure

- Production trends

- Vocal arrangement

Rock:

- Guitar riffs

- Power chords

- Energy dynamics

- Drum patterns

Electronic:

- Synthesis techniques

- Build-ups and drops

- Sound design

- Mix techniques

Classical:

- Orchestration

- Counterpoint

- Form traditions

- Period styles

Hip-Hop:

- Beat structure

- Flow patterns

- Sample integration

- Sub-genres

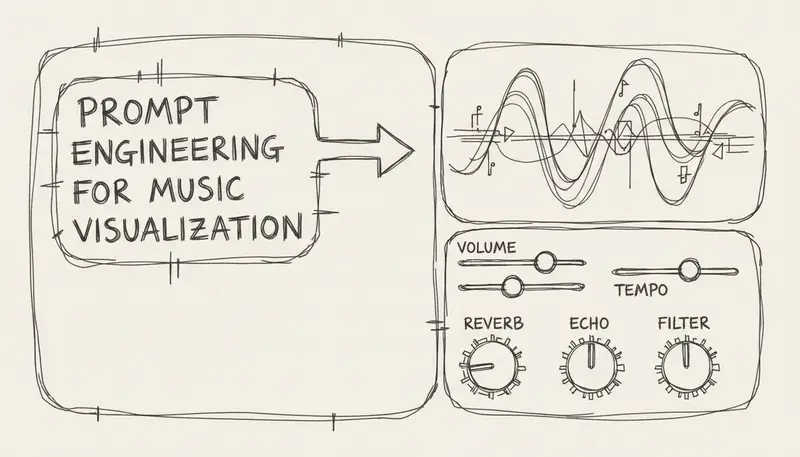

Control and Customization

Prompt Engineering Evolution

2024 prompts:

"Happy pop song"

2026 prompts:

"Upbeat indie pop with acoustic guitar and light synths, summer road trip vibe, female vocals with slight rasp, 120 BPM, verse-chorus-bridge structure, modern production, influenced by 2020s indie radio, build to anthemic chorus"

New control dimensions:

- BPM specification

- Key/scale selection

- Structure definition

- Instrument choices

- Vocal characteristics

- Production style

- Era/period influence

- Energy curves
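One way to work with these control dimensions is to keep them as structured fields and render the prompt text from them. The sketch below is purely illustrative: `PromptSpec` and its field names are hypothetical, and no platform named here is known to accept this structure. It only shows how the dimensions above map onto a prompt like the 2026 example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PromptSpec:
    """Hypothetical container for the control dimensions listed above."""

    genre: str
    instruments: List[str] = field(default_factory=list)
    vocals: Optional[str] = None
    bpm: Optional[int] = None
    key: Optional[str] = None
    structure: List[str] = field(default_factory=list)
    production: Optional[str] = None
    era: Optional[str] = None
    energy: Optional[str] = None

    def to_prompt(self) -> str:
        """Join the populated fields into one comma-separated prompt."""
        parts = [self.genre]
        if self.instruments:
            parts[0] += " with " + " and ".join(self.instruments)
        if self.vocals:
            parts.append(self.vocals)
        if self.bpm is not None:
            parts.append(f"{self.bpm} BPM")
        if self.key:
            parts.append(f"key of {self.key}")
        if self.structure:
            parts.append("-".join(self.structure) + " structure")
        if self.production:
            parts.append(self.production)
        if self.era:
            parts.append(f"influenced by {self.era}")
        if self.energy:
            parts.append(self.energy)
        return ", ".join(parts)


spec = PromptSpec(
    genre="Upbeat indie pop",
    instruments=["acoustic guitar", "light synths"],
    vocals="female vocals with slight rasp",
    bpm=120,
    structure=["verse", "chorus", "bridge"],
    production="modern production",
    era="2020s indie radio",
    energy="build to anthemic chorus",
)
print(spec.to_prompt())
```

Keeping the fields separate makes it easy to vary one dimension, such as BPM or key, while holding the rest of the prompt fixed.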

Fine-Tuning Capabilities

Post-generation editing:

What you can adjust:

- Volume levels (stems)

- EQ per instrument

- Reverb and effects

- Tempo changes

- Key transposition

- Arrangement modifications
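Two of these adjustments reduce to simple arithmetic. The sketch below assumes stems are available as plain lists of samples and uses standard audio conventions: gain in decibels converts to a linear factor of 10^(dB/20), and a shift of n semitones in equal temperament scales frequency by 2^(n/12). The function names are assumptions for illustration and do not describe any platform's editor.

```python
from typing import Dict, List


def db_to_gain(db: float) -> float:
    """Convert a level change in decibels to a linear amplitude factor."""
    return 10 ** (db / 20)


def semitone_ratio(semitones: float) -> float:
    """Frequency ratio for a transposition in equal temperament."""
    return 2 ** (semitones / 12)


def mix_stems(
    stems: Dict[str, List[float]], gains_db: Dict[str, float]
) -> List[float]:
    """Sum stems sample by sample after applying a per-stem gain in dB.

    Stems missing from gains_db are mixed at 0 dB (unchanged).
    """
    length = min(len(samples) for samples in stems.values())
    mix = [0.0] * length
    for name, samples in stems.items():
        gain = db_to_gain(gains_db.get(name, 0.0))
        for i in range(length):
            mix[i] += gain * samples[i]
    return mix


stems = {"vocals": [1.0, 0.5], "drums": [0.5, 0.5]}
print(mix_stems(stems, {"drums": -20.0}))  # drums lowered by 20 dB
print(f"+2 semitones scales frequency by {semitone_ratio(2):.4f}")
```

A production editor would also need pitch-preserving time stretching for tempo changes, which is well beyond this arithmetic.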

Platform capabilities:

| Platform | Stem Separation | EQ Control | Effect Control |
|---|---|---|---|
| Udio | ✅ Full | ✅ Yes | ✅ Advanced |
| Suno | ✅ Paid tier | ⚠️ Limited | ⚠️ Basic |
| MusicMake.ai | ✅ Paid tier | ⚠️ Limited | ⚠️ Basic |
| Splash Pro | ✅ Full | ✅ Advanced | ✅ Professional |

Training Data Trends

Dataset Evolution

Dataset composition (2026):

- Total size: 100-500 million songs

- Genres: 1,000+ categories

- Languages: 100+ languages

- Eras: 1900s to present

- Quality: CD quality minimum

Data sources:

- Licensed music libraries

- Public domain works

- User-contributed content

- Synthetic training data

Ethical considerations:

- Artist consent programs

- Opt-out mechanisms

- Compensation models

- Attribution systems

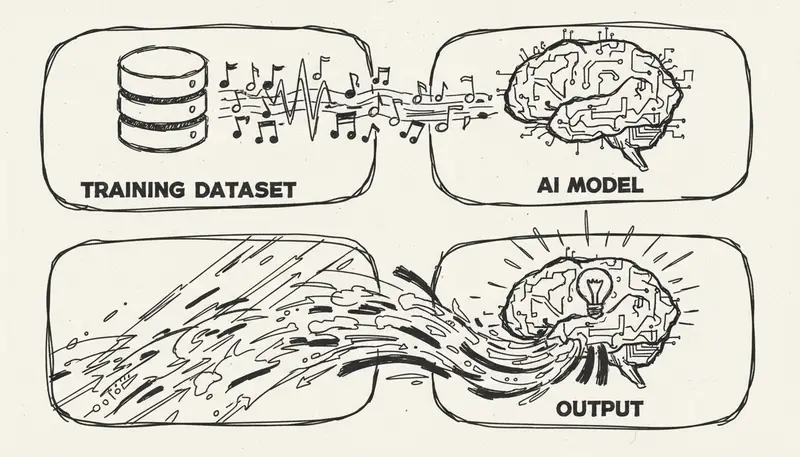

Synthetic Data Generation

Self-improvement loop:

1. Generate music with current model

2. Human quality evaluation

3. High-quality outputs added to dataset

4. Retrain model with augmented data

5. Improved model generates better music

6. Repeat cycle

Benefits:

- Reduced licensing costs

- Controlled data quality

- Bias mitigation

- Novel styles exploration

Challenges:

- Quality drift risks

- Homogenization concerns

- Validation requirements
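The self-improvement loop can be sketched as a small function. Everything here is a toy stand-in under stated assumptions: the "model" is a single number, `generate`, `evaluate`, and `retrain` are hypothetical callables supplied by the caller, and the quality threshold plays the role of the human evaluation step. It shows the control flow only, not how any platform actually trains.

```python
import random
from statistics import mean


def run_loop(model, generate, evaluate, retrain, dataset,
             rounds, n_candidates, threshold):
    """Generate, filter by quality, augment the dataset, retrain, repeat."""
    dataset = list(dataset)  # work on a copy of the seed data
    for _ in range(rounds):
        # Step 1: generate candidates with the current model.
        candidates = [generate(model) for _ in range(n_candidates)]
        # Steps 2-3: keep only outputs that pass the quality gate.
        accepted = [c for c in candidates if evaluate(c) >= threshold]
        dataset.extend(accepted)
        # Step 4: retrain on the augmented dataset.
        model = retrain(model, dataset)
    return model, dataset


# Toy stand-ins: a "track" is just its own quality score.
rng = random.Random(0)
seed_data = [0.0, 0.2, 0.4]
threshold = 0.5

final_model, final_data = run_loop(
    model=mean(seed_data),
    generate=lambda m: rng.gauss(m, 1.0),
    evaluate=lambda track: track,
    retrain=lambda m, data: mean(data),
    dataset=seed_data,
    rounds=3,
    n_candidates=20,
    threshold=threshold,
)
print(len(seed_data), "->", len(final_data), "tracks")
```

Even in this toy version the dataset shifts toward whatever the evaluator rewards, which is where the drift and homogenization concerns come from.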

Real-Time Generation

Latency Improvements

Generation speed evolution:

| Year | Average Time | Quality | Hardware |
|---|---|---|---|
| 2024 | 2-3 minutes | Medium | GPU |
| 2025 | 60-90 seconds | High | GPU/TPU |
| 2026 | 20-45 seconds | Very High | Optimized |

Real-time applications:

- Live streaming (Mubert)

- Gaming soundtracks

- Interactive installations

- Performance augmentation

Infrastructure:

- Edge computing deployment

- Cloud-based generation

- Hybrid approaches

- Dedicated hardware

Streaming Generation

Progressive output:

How it works:

- Generate first 10 seconds

- Stream to user while generating next section

- Continuous generation and playback

- Infinite duration capability
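The progressive-output pattern above is essentially a producer-consumer pipeline: one worker keeps generating sections into a small buffer while playback drains it. The sketch below assumes a hypothetical `generate_section` placeholder that returns a label instead of audio. It illustrates the buffering idea only and is not how Mubert or any other platform is known to implement it.

```python
import queue
import threading
from typing import List


def generate_section(index: int, seconds: int = 10) -> str:
    """Hypothetical stand-in for a model call that returns audio."""
    return f"section-{index}:{seconds}s"


def producer(buffer: queue.Queue, stop: threading.Event) -> None:
    """Keep generating sections until asked to stop."""
    index = 0
    while not stop.is_set():
        section = generate_section(index)
        # Retry while the buffer is full so the stop flag is still checked.
        while not stop.is_set():
            try:
                buffer.put(section, timeout=0.1)
                break
            except queue.Full:
                continue
        index += 1


def play_stream(num_sections: int, buffer_size: int = 2) -> List[str]:
    """Consume sections in order while the producer works ahead."""
    buffer: queue.Queue = queue.Queue(maxsize=buffer_size)
    stop = threading.Event()
    worker = threading.Thread(target=producer, args=(buffer, stop), daemon=True)
    worker.start()
    played = [buffer.get() for _ in range(num_sections)]
    stop.set()
    worker.join()
    return played


print(play_stream(3))
```

The bounded buffer keeps generation only a section or two ahead of playback. That caps memory use and lets the stream run indefinitely as long as each section is generated faster than it plays.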

Platforms:

- Mubert (pioneer)

- Soundraw (experimental)

- Custom solutions

Use cases:

- Focus music

- Meditation

- Store ambiance

- Background loops

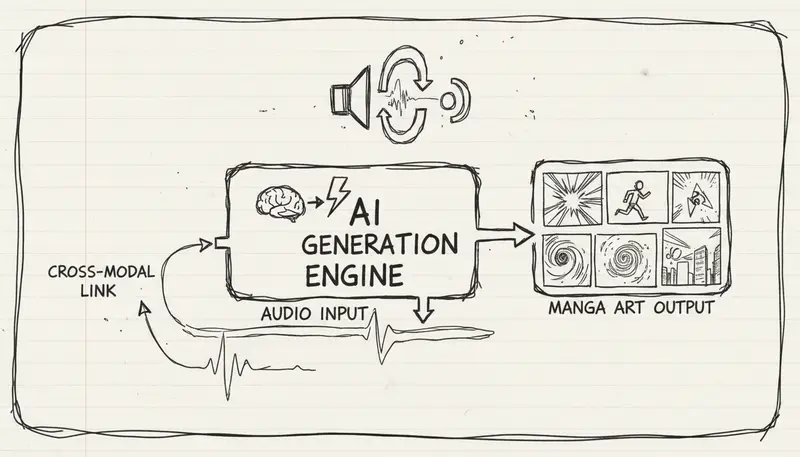

Multi-Modal Integration

Text-to-Music

Natural language understanding:

What AI understands:

- Genre descriptions

- Mood descriptors

- Instrument specifications

- Structure requests

- Style references

- Tempo indicators

- Energy levels

Example:

User: "Create a chill lofi beat for studying"

AI understands:

- Genre: Lofi hip-hop

- Mood: Calm, relaxed

- Use case: Background/studying

- Elements: Jazz chords, vinyl crackle, soft drums

- BPM: 70-90

Image/Video-to-Music

Visual analysis capabilities:

What AI extracts:

- Scene type (nature, urban, action)

- Color palette → mood mapping

- Movement speed → tempo

- Content type → genre suggestion

- Emotional tone

Applications:

- YouTube video soundtracks

- Film scoring assistance

- Photo slideshow music

- Game level themes

Audio-to-Music

Input types:

- Humming/singing

  - Melody extraction

  - Full arrangement generation

  - Style transfer

- Audio samples

  - Sample-based generation

  - Style matching

  - Continuation/variation

- Environmental sounds

  - Soundscape integration

  - Ambient music creation

  - Field recording enhancement

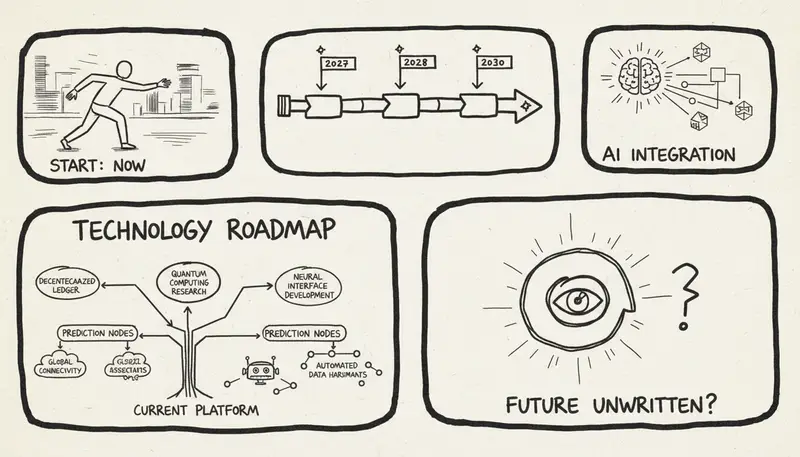

Future Technology Directions

2027-2028 Predictions

Expected advances:

- Quantum-assisted generation (experimental)

  - Quantum computing integration

  - Novel composition approaches

  - Exponential complexity handling

- Brain-computer interfaces

  - Direct thought-to-music

  - Emotion-responsive generation

  - Subconscious creativity access

- Holographic audio

  - 3D spatial audio native generation

  - Immersive soundscapes

  - VR/AR music experiences

- Molecular music

  - DNA-based music encoding

  - Biological inspiration

  - Novel sound synthesis

Long-Term Vision (5-10 years)

Transformative possibilities:

Perfect replication:

- Indistinguishable from human creation

- All styles mastered completely

- Zero artifacts or limitations

True creativity:

- Novel genres invented by AI

- Unexplored musical territories

- Beyond human composition

Consciousness simulation:

- Emotional depth matching humans

- Intentionality and meaning

- Artistic statement capability

Universal accessibility:

- Real-time generation on any device

- No technical barriers

- Global democratization

Technical Challenges

Current Limitations

Unsolved problems:

- True novelty

  - Limited by training data

  - Pattern-based generation

  - Creativity boundaries

- Long-form coherence

  - 10+ minute consistency

  - Album-level cohesion

  - Epic composition structure

- Intentionality

  - Lack of "message"

  - No artistic statement

  - Meaning generation

- Cultural authenticity

  - Deep cultural understanding

  - Historical context

  - Tradition respect

Research Frontiers

Active research areas:

- Explainable AI music

  - Understanding generation decisions

  - Controllable creativity

  - Transparent processes

- Few-shot learning

  - Generate in new styles quickly

  - Minimal example requirements

  - Transfer learning

- Interactive generation

  - Real-time human-AI collaboration

  - Improvisation systems

  - Adaptive composition

- Efficient architectures

  - Smaller models, same quality

  - Edge device deployment

  - Energy efficiency

Conclusion: The Technology Trajectory

AI music generation technology in 2026 has achieved remarkable milestones:

Key achievements:

- ✅ Studio-quality audio synthesis

- ✅ Natural vocal generation

- ✅ Real-time generation capabilities

- ✅ Multi-modal input support

- ✅ 48kHz/24-bit output quality

- ✅ 50+ language support

Remaining challenges:

- ⚠️ True creative novelty

- ⚠️ Long-form coherence

- ⚠️ Cultural authenticity depth

- ⚠️ Intentional meaning generation

Future outlook: The technology is advancing rapidly. Within 2-3 years, many of the technical limitations above will likely be reduced, leaving mainly philosophical questions about AI creativity and artistry.

For creators, the message is clear: the technology is mature enough for professional use today, and it will only get better.


Last updated: January 15, 2026 | Technical analysis based on platform capabilities and research papers

作者

分类

更多文章

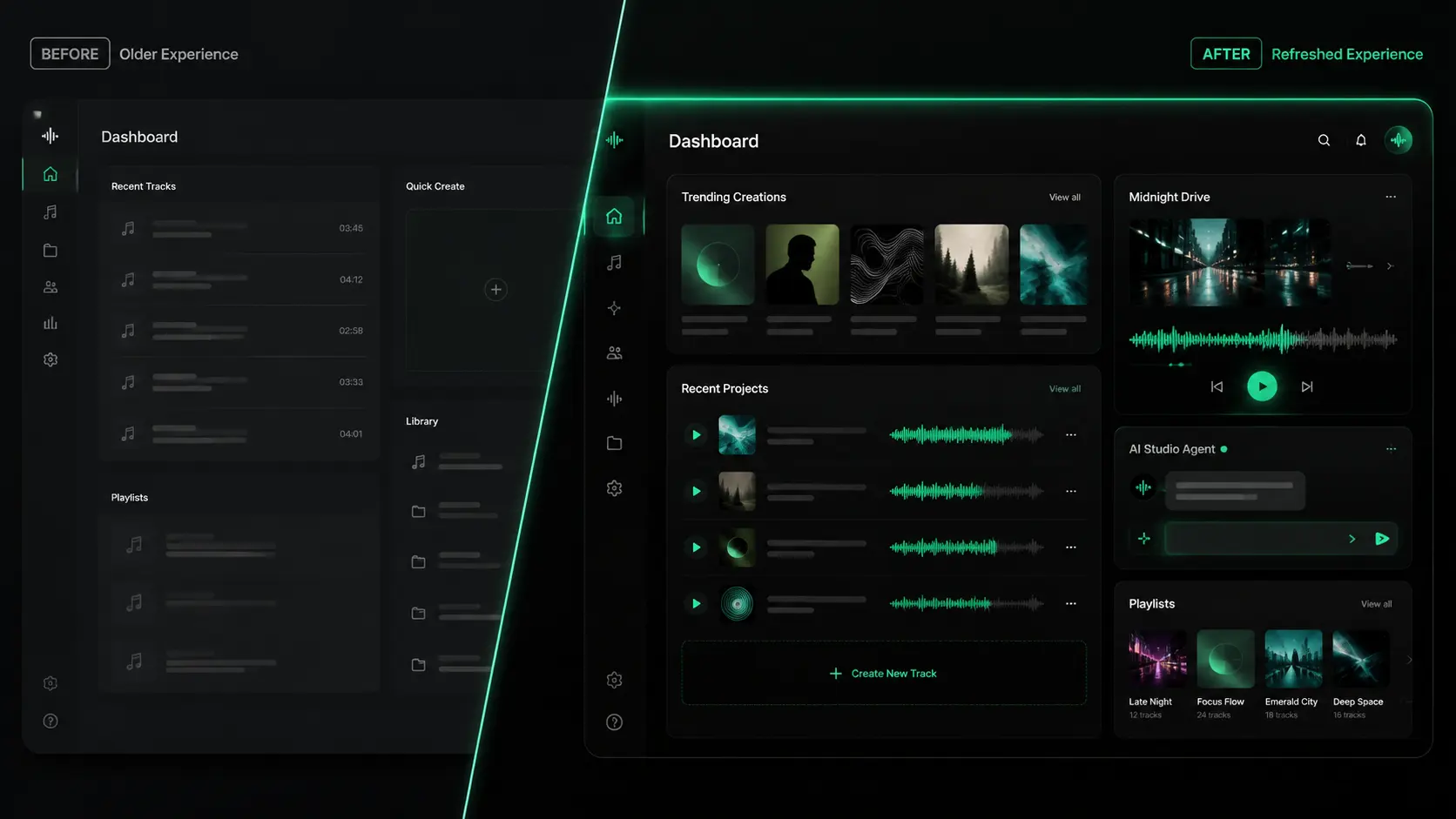

Music Make 2.0 UI 升级:更清爽的深色音乐工作台

了解 Music Make 2.0 的界面风格升级:围绕创作、听歌和作品管理,打造更聚焦的深色音乐工作台体验。

MusicMake v2.0:社区、社交,以及全新的 AI 音乐 Agent

一周内两次大更新——一个发现和分享音乐的社区,以及一个能把对话变成成品的 AI Agent。

模型升级:V5 全面免费,V5.5 Signature 正式发布

基于用户反馈,我们完成了一轮全面的模型升级。V5 Studio 现已向所有用户免费开放,同时推出会员专属旗舰模型 V5.5 Signature。